Is it possible for AI-powered chatbots to replace psychotherapy? In recent years, much of therapy has moved from in-person meetings to online sessions, which raises the question of whether a chatbot can provide mental health help of the same quality.

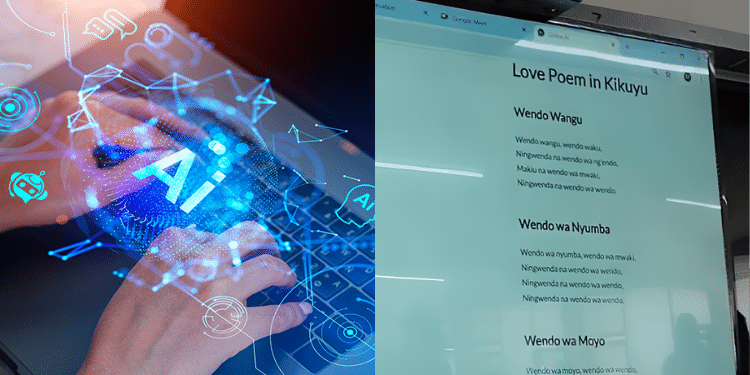

A good example is ChatGPT, a chatbot built on a large language model developed by OpenAI. It uses machine learning to interpret natural language and generate human-like responses to questions and statements.

Launched in November 2022, the OpenAI-developed chatbot had gathered over 100 million users by January 2023.

A number of mental health websites are currently using chatbots to set appointments, answer FAQs about services and policies, and store customer data for later convenience.

ChatGPT's strong language ability lets it handle human interaction, which means that, to some extent, it could be used as an alternative to a human therapist when someone needs to talk through mental health issues.

It is one thing to use an AI-powered chatbot to draft an article, but it is an entirely different matter to seek psychological help from a tool such as ChatGPT.

“As an AI language model, I lack the empathy, intuition, and emotional intelligence required to provide therapy effectively. While I have been trained on a vast amount of text data from the internet, including information related to mental health, therapy involves a deeper level of emotional understanding and personalized guidance that goes beyond providing information. Therapy requires building a trusting relationship between the therapist and the client, which can only be achieved through human interaction.

“Additionally, the practice of therapy is regulated and requires extensive training, experience, and licensing to ensure that clients receive effective and ethical treatment. It is important to seek help from a licensed therapist or mental health professional who can provide the necessary support, guidance, and expertise tailored to your individual needs,” ChatGPT responded when prompted to help with therapy.

This response suggests that AI cannot entirely replace humans in certain job roles.


Using AI to counsel a client raises several ethical concerns.

Therapy involves sharing personal and sensitive information with a trained professional, and it is essential to ensure that the information is kept confidential and secure. AI language models can collect and store the information provided during therapy sessions, raising concerns about data privacy and confidentiality.

Whether an AI language model can deliver individualized, effective therapy is another ethical issue. AI language models lack the empathy and emotional intelligence required to offer tailored and effective counseling.

In January, Robert Morris, cofounder of the behavioral health platform Koko, announced on Twitter that the platform had provided mental health support to some 4,000 people using GPT-3 in an experiment.

Chatbots can be an effective way to provide additional support and guidance to those who are already receiving therapy and those who may not have access to traditional therapy services.

Still, it is imperative to seek help from a qualified therapist or mental health specialist who can offer support, direction, and knowledge suited to your needs, and who follows ethical norms on confidentiality, privacy, and high-quality care.